How 6 Words Almost Killed a $90 Billion Company

A secret dossier, a burning effigy, and a governance flaw.

On November 17, 2023, Sam Altman clicked a Google Meet link in a Las Vegas hotel room and learned he had five minutes before a blog post would announce his firing as CEO of an $86 billion company.

Microsoft, the company’s largest investor at $13 billion, got sixty seconds of warning.

The board’s entire justification fit into six words: “not consistently candid in his communications.”

What followed was the most unhinged five days in Silicon Valley history: a 52-page secret dossier sent via disappearing emails, a wooden effigy burned in a forest, 97% of employees threatening to quit, and a smoke machine setting off fire alarms at the victory party.

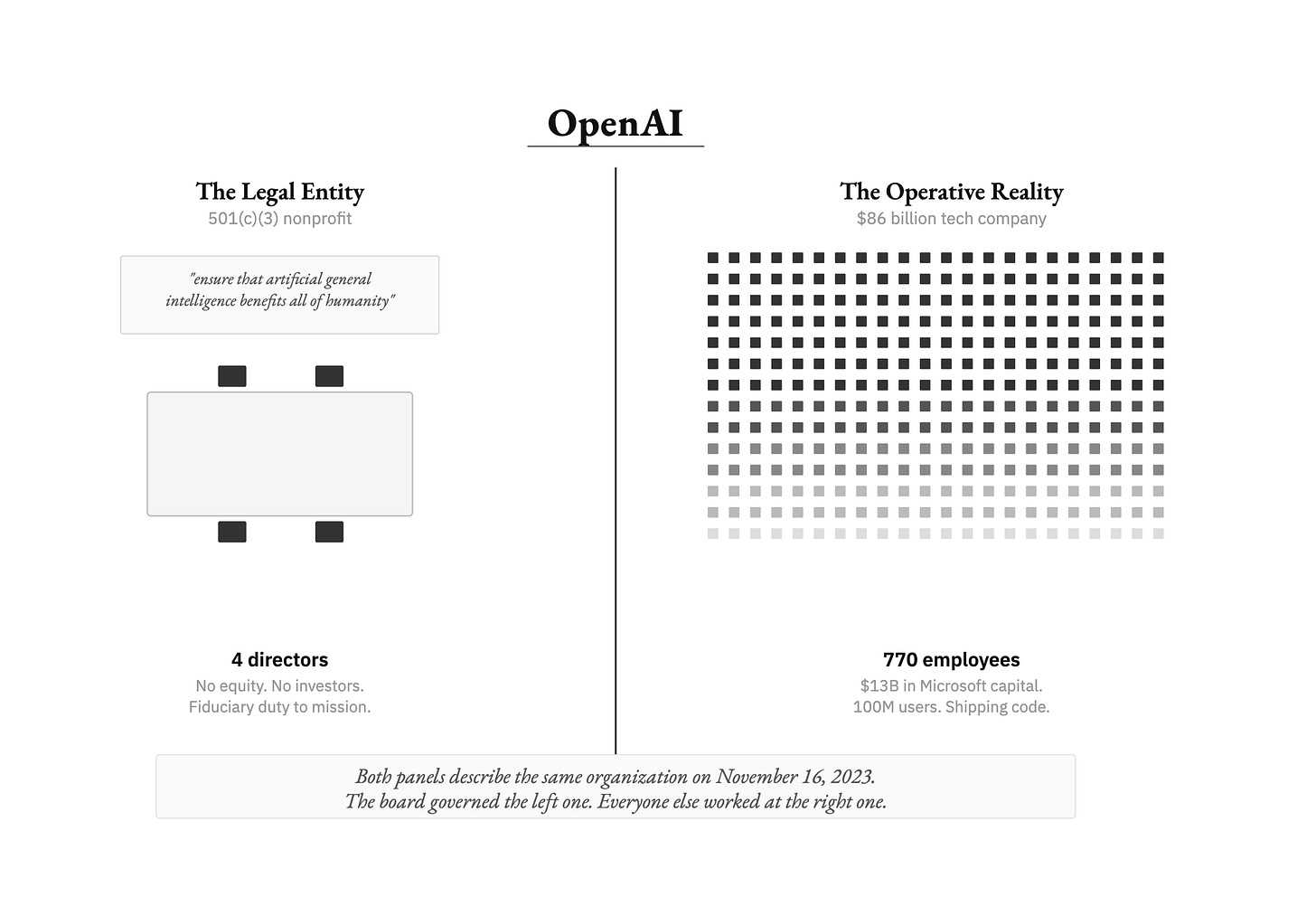

But the real story is what happens when the legal structure of a company and the lived reality of that company become two completely different things. Four people with zero equity tried to blow up a $90 billion enterprise over a weekend.

This crisis exposed a dynamic with implications far beyond tech: structural drift. It’s what happens when an organization’s formal governance framework stays fixed while the lived reality of the organization changes underneath it.

The charter stays the same. The bylaws stay the same. But the organization quietly becomes something else, and nobody updates the paperwork, because everyone implicitly agrees on what the organization actually is.

The formal structure becomes decorative. Then one day, someone tries to use it for its intended purpose, and the whole thing detonates.

That’s exactly what happened at OpenAI. The board tried to exercise the authority the charter gave them. They had the legal right. They had documented evidence. They may have been right on some of the merits. And they discovered that the authority existed only on paper, because the organization described in the charter and the organization experienced by 770 employees, $13 billion in Microsoft capital, and a hundred million users had become two entirely different things.

The question that matters is how the gap between charter and reality grew so wide that nobody noticed until it was too late.

The Structure That Made It Possible

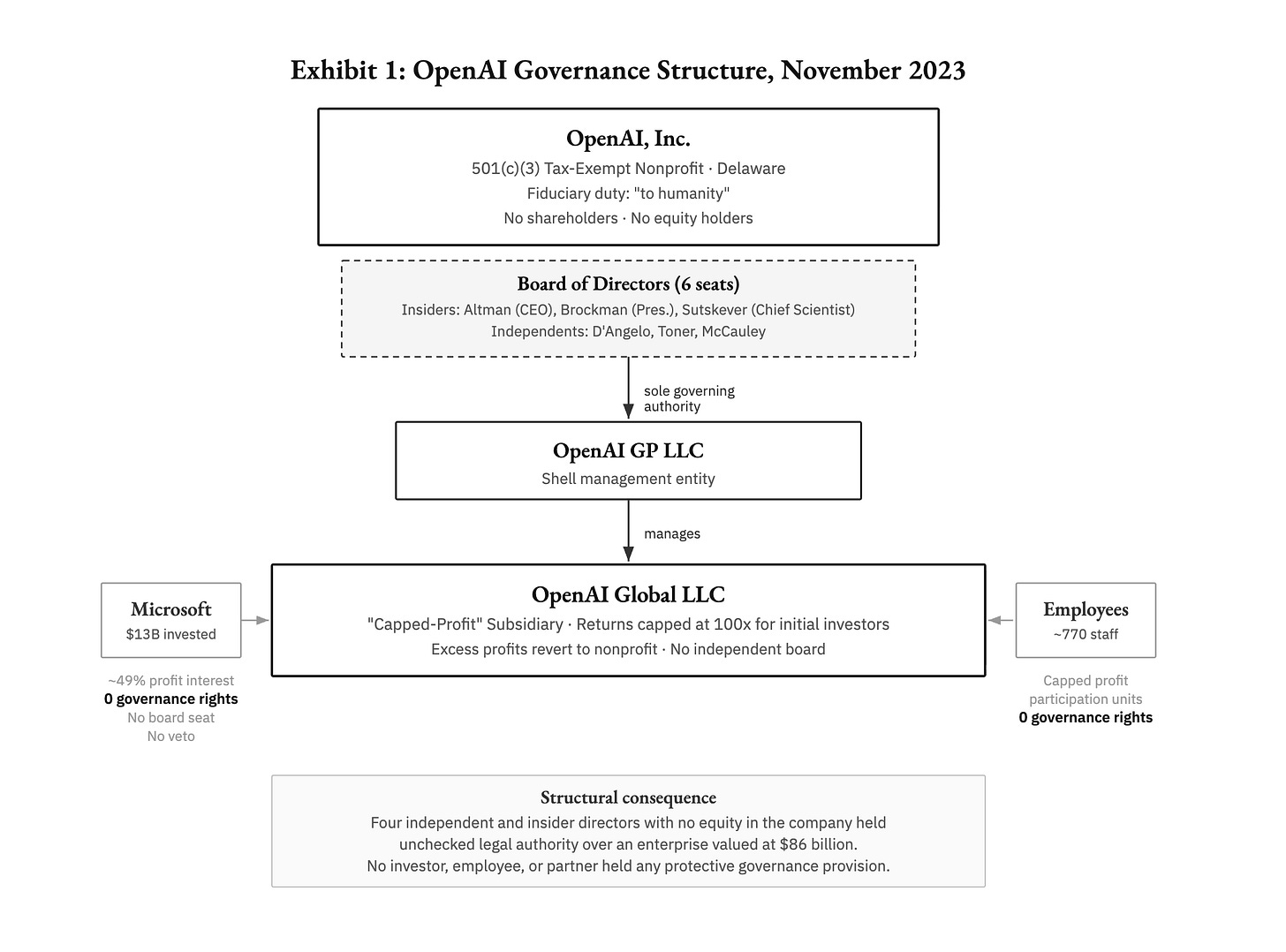

OpenAI was incorporated in December 2015 as a 501(c)(3) tax-exempt nonprofit in Delaware. Its founding donors, including Altman, Elon Musk, Reid Hoffman, and Peter Thiel, pledged $1 billion, though only about $130 million was actually collected.

The charter declared a fiduciary duty not to shareholders but “to humanity.” The mission: ensure artificial general intelligence benefits all of humanity.

By 2019, it became clear that donations couldn’t fund the billions needed to train frontier AI models. So the board created something baroque: a “capped-profit” subsidiary called OpenAI Global LLC, managed by the nonprofit through a shell entity. Investors and employees could earn returns, but those returns were capped at 100x for the first round, with anything beyond the cap flowing back to the nonprofit.

The operating agreement contained a sentence that still makes my jaw drop every time I read it: investors should view their money “in the spirit of a donation, with the understanding that it may be difficult to know what role money will play in a post-AGI world.”

The nonprofit’s six-person board was the supreme governing authority over everything. Microsoft held a roughly 49% profits interest but zero governance rights.

No investor had a board seat, a veto, or any protective provision. The board’s fiduciary duty ran to the charitable mission, not to profit generation.

This structure made perfect sense in 2019. It made progressively less sense every month after ChatGPT launched in November 2022 and reached a hundred million users in two months.

By late 2023, the structural drift had become a chasm. The board governed a nonprofit with a safety mission that happened to run a commercial subsidiary.

The employees, the investors, and Microsoft saw an $86 billion technology company that happened to have a nonprofit parent. Both versions were internally coherent. Both were defensible on their own terms. They just couldn’t coexist in the same boardroom.

And the board that governed one of the fastest-growing companies in history? It included Adam D’Angelo, the Quora CEO whose AI platform Poe directly competed with OpenAI’s GPT Store, a conflict no one addressed. It included Helen Toner, a Georgetown academic who joined the board through effective altruist networks. It included Tasha McCauley, who co-founded Fellow Robots and taught robotics at Singularity University.

None of them had experience governing a company at this scale.

And it included Ilya Sutskever, OpenAI’s chief scientist. Sutskever had co-invented AlexNet under Geoffrey Hinton at the University of Toronto and catalyzed the deep learning revolution. He was OpenAI’s intellectual core.

He was also the person who, at a September 2022 leadership offsite at Tenaya Lodge in the Sierra Nevada, commissioned a wooden effigy from a local artist. It represented a “good and aligned AI” that turned out to be “lying and deceitful.”

Researchers in bathrobes formed a semicircle beneath ancient redwoods. Sutskever doused the effigy in lighter fluid and set it ablaze. They watched in silence. At the 2022 holiday party, he led employees in chanting “Feel the AGI!”, a moment so memorable that staff created a custom Slack reaction emoji. Three employees told reporters he had started “acting like some sort of spiritual leader.”

These four people had the legal authority to fire Sam Altman with zero notice. That authority was about to collide with reality.

A Year of Waiting and a 52-Page Bomb

The coup did not happen overnight. In his sworn October 2025 deposition in the Musk v. Altman lawsuit, Sutskever revealed he had been contemplating Altman’s removal for at least a year before it happened.

The problem was board composition. With Reid Hoffman, Shivon Zilis, and Will Hurd on the board (all considered Altman-friendly), the votes weren’t there. But across 2023, all three departed: Hoffman over conflicts with his own AI investments, Zilis quietly, and Hurd for a quixotic presidential campaign. Their seats were never filled because the remaining directors couldn’t agree on replacements.

“I was waiting for a moment when the board dynamics would allow for Altman to be replaced,” Sutskever testified. “That the majority of the board is not obviously friendly with Sam.” The chief scientist of one of the most consequential organizations in the world spent a year watching board seats empty, waiting for the arithmetic to tip. This was patience, not impulse.

In October 2023, the catalyst arrived. Sutskever and CTO Mira Murati approached the independent board members with what Toner described as “really serious” conversations.

They used the phrase “psychological abuse.” They said Altman cultivated “a toxic culture of lying.” Murati reportedly told the board: “Altman had a simple playbook: first, say whatever he needed to say to get you to do what he wanted, and second, if that didn’t work, undermine you or destroy your credibility.”

Sutskever added: “I don’t think Sam is the guy who should have the finger on the button for AGI.”

Sutskever compiled his evidence into a 52-page memo. Its opening line: “Sam exhibits a consistent pattern of lying, undermining his execs, and pitting his execs against one another.” He sent it only to the three independent directors using Gmail’s disappearing-message feature.

When asked in his deposition why he used self-destructing emails: “Because I was worried that those memos will somehow leak.”

When asked why he didn’t send it to Altman: “Because I felt that, had he become aware of these discussions, he would just find a way to make them disappear.”

Toner, in a May 2024 TED AI Show interview, laid out the specific instances behind the board’s six-word justification. Two stand out for their concreteness.

When ChatGPT launched in November 2022, the most consequential product launch in AI history (maybe in history, period), the board was not informed in advance: “We learned about ChatGPT on Twitter.”

And when OpenAI’s restrictive agreements threatening to claw back departing employees’ vested equity became public, Altman said he had not been aware of the specific provisions. Vox later obtained incorporation documents from April 2023, bearing his signature, that explicitly authorized them. Whether that reflected genuine unawareness of boilerplate language or something else, it became another data point in the board’s case.

Toner cited additional instances involving the OpenAI Startup Fund and internal safety processes. “We just couldn’t believe things that Sam was telling us,” she said. “And that’s a completely unworkable place to be in as a board.”

Here is the detail that keeps nagging at me. Much of the memo’s content came from a single source: Mira Murati. Sutskever admitted under oath that he never independently verified several key claims, including allegations that Altman had been “pushed out from Y Combinator for similar behaviors.”

When asked why he didn’t verify with Brockman directly: “It didn’t occur to me.” In hindsight, he conceded, “secondhand knowledge is an invitation for further investigation.”

This is where process collapsed.

Whatever the merits of the underlying concerns, a 52-page document built partly on unverified secondhand claims, distributed via disappearing messages, used to justify the termination of a CEO running an $86 billion company with no advance notice to investors, is not a process. It’s a conspiracy. And I don’t mean that pejoratively.

The board members genuinely believed they were protecting humanity. They were operating entirely within the version of OpenAI described in the charter: a nonprofit whose board had unchecked authority and a fiduciary duty to all of mankind.

Within that version, their actions were logical.

The problem was that almost nobody else inhabited that version anymore.

When De Jure Met De Facto

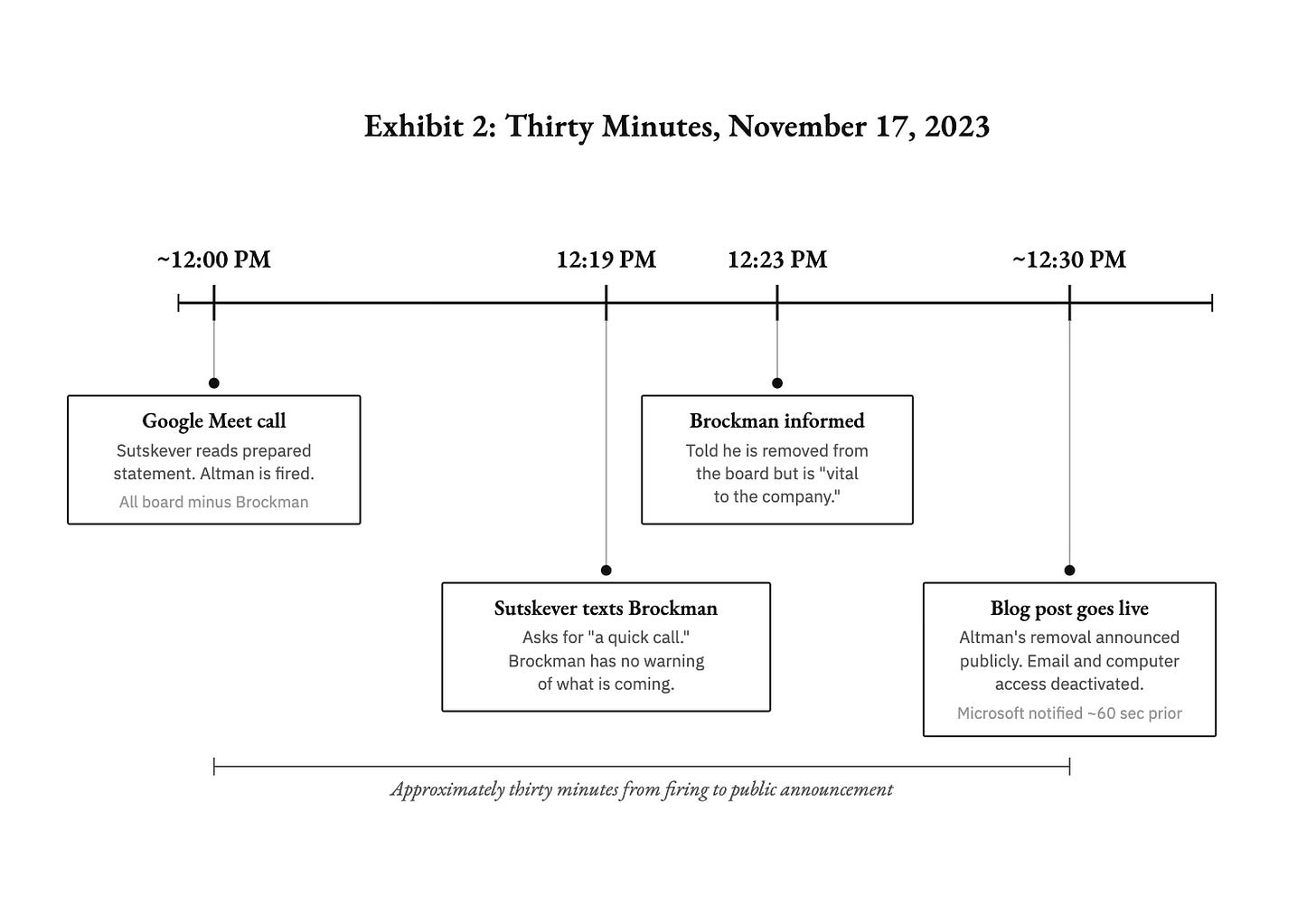

Thursday, November 16. The four dissenting board members held a furtive video call and voted to fire Altman. That evening, Murati was informed she would become interim CEO.

Friday, November 17, noon. Altman clicked the Google Meet link. Sutskever read a prepared statement. Altman recalled: “It was very strange. I couldn’t get any questions answered. I really did in that first moment think I was having some crazy vivid dream.” Nineteen minutes later, Brockman was told he was removed from the board but “vital to the company.” By 12:30 PM, the blog post was live. Altman’s email and computer access were immediately deactivated.

That evening, as Altman prepared to fly from Las Vegas back to San Francisco, his partner, Oliver Mulherin, put his hands on Sam’s shoulders at the foot of the airplane stairs: “Sam, I’m with you 100 percent no matter what, but what the f*ck did you do?” Altman: “Gosh, no!” Oliver: “Okay, I’m good.” They boarded the plane.

Saturday, November 18. Altman had a call with two board members who floated his return. His first reaction: “Absolutely not.” His second: “I will come back if all of you resign right now.” He later called this “not a constructive thing” and said a more diplomatic response might have ended the crisis that morning.

That evening, Altman tweeted “i love the openai [sic] team so much,” and employees began quote-tweeting it with heart emojis en masse, a coordinated signal of loyalty, a headcount of who would follow him out the door. It was most of the company. The first tremor of de facto power making itself felt.

Sunday, November 19. Negotiations, mediated remotely by Satya Nadella, continued all day. The board agreed in principle to resign, then reversed course and installed Emmett Shear as interim CEO.

Shear, the Twitch co-founder and former CEO, was on paternity leave with a nine-month-old son. He estimated his personal probability of AI-caused human extinction at between 5% and 50%. He was reportedly a character in a Harry Potter fanfiction popular within the effective altruism community. He accepted after reflecting “for just a few hours.”

Meanwhile, the board had already been rejected by GitHub CEO Nat Friedman and Scale AI CEO Alex Wang. They contacted Anthropic CEO Dario Amodei about replacing Altman and proposed merging the two companies entirely. Toner was “the most supportive” of the Anthropic merger. Sutskever was “very unhappy about it.” Amodei declined both proposals.

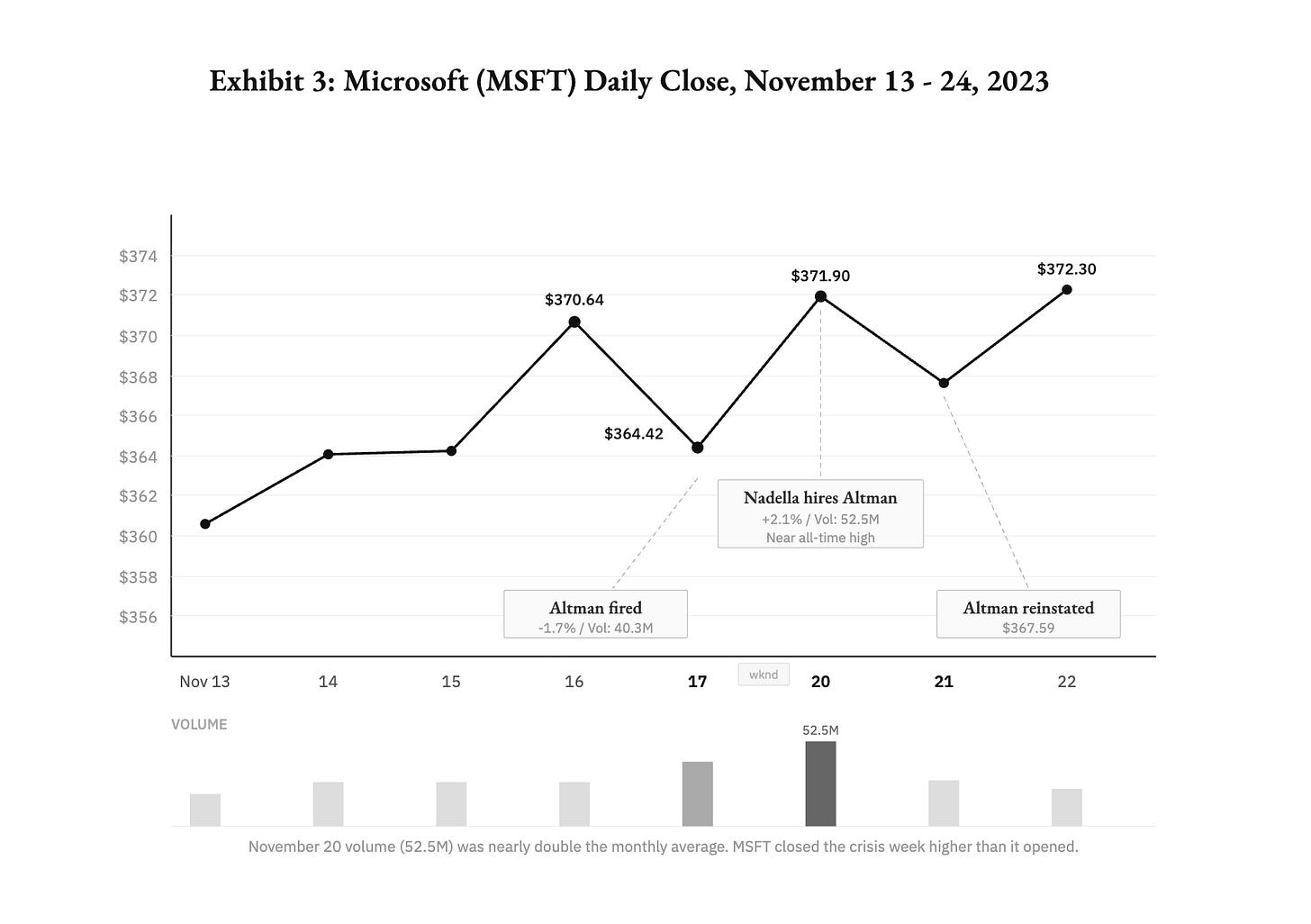

Then Nadella made the single most decisive move of the entire crisis. He publicly announced that Altman and Brockman would lead a new Microsoft AI research team, with positions available for every OpenAI employee at matched compensation. Microsoft calculated the cost of absorbing the entire team at approximately $25 billion.

Nadella later called it “in a world of bad choices... definitely not my preferred thing,” but “the worst outcome would have been all these people leave and they go to our competition.”

The announcement gave Altman leverage, gave employees a credible exit, and applied existential pressure on the board. Microsoft stock rose 2% to near all-time highs. The board, which had de jure authority, suddenly had no moves left against the de facto coalition assembling against it.

Monday, November 20. Altman, still groggy from a sleeping pill he’d taken at 3 AM, was shaken awake by Oliver. “You need to check your phone.” Sutskever had posted his regret. The emotional turning point had come the day before.

According to the Wall Street Journal, Anna Brockman (Greg’s wife, whose wedding Sutskever had officiated in 2019 at the OpenAI offices, with a robotic hand serving as ring bearer) went to the offices and cried and pleaded with Sutskever to change his mind. The man who had married the couple had tried to destroy the company the groom co-founded. The weight of that broke something.

The structural drift had been invisible for years. One exchange during the crisis negotiations made it impossible to miss.

When OpenAI executives warned that Altman’s absence would destroy the company, and that this contradicted the board’s mission, Toner responded that destruction “could be consistent with the mission.” Even Sutskever testified he disagreed: “At that point in time, the answer was definitely ‘No’ for me.”

That single exchange captures the philosophical chasm at the heart of everything. To Toner, the mission was the load-bearing element. If the company had to be destroyed to protect it, so be it. To the 770 employees who built ChatGPT, the company was the mission.

Both positions were internally consistent. Both were, within their own framing, rational. They were just operating inside different versions of the same organization, and when those versions collided in that room, everyone present could finally see the gap that had been widening for years.

By Monday evening, 745 of approximately 770 employees (97%) had signed a letter threatening mass resignation. The signatories included Murati, COO Brad Lightcap, and, astonishingly, Sutskever himself.

Tuesday, November 21. Shear told the board he would resign, too, if a resolution wasn’t reached. By 10 PM, Altman was back as CEO with a new board chaired by Bret Taylor, alongside Larry Summers and Adam D’Angelo. Three of the four directors who had fired him were gone. At the offices, someone brought a smoke machine to the victory celebration. It triggered the fire alarm and dispatched two fire trucks.

I couldn’t make this up if I tried.

What the Collision Destroyed

The new board hired WilmerHale to investigate. The law firm reviewed 30,000 documents and conducted dozens of interviews. Its March 2024 conclusions threaded a diplomatic needle: the prior board “acted within its broad discretion” to fire Altman, but his “conduct did not mandate removal.”

The firing arose from “a breakdown in the relationship and loss of trust,” not from “concerns regarding product safety or security, the pace of development, OpenAI’s finances, or its statements to investors.”

Larry Summers reportedly told people privately that the investigation found “many instances of Altman saying different things to different people” but to a degree the new board decided “didn’t preclude him from continuing to run the company.”

The full report was never released. The dueling narratives hardened. Toner and McCauley, in a 2024 Economist op-ed, criticized the secrecy.

The aftermath reshaped OpenAI completely. By October 2025, the nonprofit had become the OpenAI Foundation, the for-profit had become a Public Benefit Corporation, and the 100x profit cap was eliminated entirely. Microsoft held a 27% equity stake. Altman, who had held zero equity during the crisis, was reportedly in discussions for a 7% stake. The valuation soared from $86 billion to $500 billion.

No employees actually left for Microsoft. Not one. The threat of 97% of the company walking out had been the ultimate negotiating lever. It worked without a single person following through.

The human cost ran in a different direction. Sutskever departed in May 2024 and founded Safe Superintelligence Inc., which raised $3 billion at a $32 billion valuation largely on the strength of his reputation.

Jan Leike, who co-led the Superalignment team, left for Anthropic, writing that “we finally reached a breaking point.” Murati departed in September 2024 and founded Thinking Machines Lab, which reached a $12 billion valuation within months. Nearly half of OpenAI’s AGI safety researchers had left by August 2024.

The Superalignment team, Sutskever’s signature initiative that had been promised 20% of OpenAI’s compute, was dissolved entirely.

The four board members had the legal authority to fire Altman. They had documented evidence of what they genuinely believed was systematic dishonesty. They may have been right on the merits.

But they underestimated something fundamental: that de facto power in an organization doesn’t live in the charter. It lives in the 770 people who show up every day, the $13 billion in capital, and the hundred million users who depend on the product.

The nonprofit board had de jure authority over an $86 billion enterprise. The de facto power belonged to the employees, the investors, and Microsoft. It always had.

The Safety Mechanism That Ate Itself

Exercising the safety mechanism destroyed the safety mechanism.

The board members who cared most about keeping OpenAI accountable to its mission were removed. The Superalignment team was dissolved. The profit cap was eliminated. The nonprofit became a foundation with an equity stake. Every structural guardrail that the original charter had put in place to prevent exactly this outcome was dismantled in the aftermath of the one serious attempt to use one.

Toner and McCauley warned in their Economist op-ed that “self-governance cannot reliably withstand the pressure of profit incentives.” They were right, though the pressure didn’t come from investors or shareholders.

It came from 745 people who chose their CEO over their board, a partner willing to absorb the whole team for $25 billion, and a structure that was only as durable as the shared belief that sustained it.

That’s structural drift in its terminal phase. The charter described one organization. Reality had built another. When the charter tried to assert itself, reality won, and then reality rewrote the charter to make sure it could never happen again.

Governance documents describe an organization. But the organization people actually experience is built from accumulated practice, shared investment, and the interpretive frames that 770 people carry to work every morning. When those two versions diverge far enough, the document becomes decorative. The lived version becomes the real charter.

Every organization carries formal structures designed for a version of itself that may no longer exist.

Most of the time, the gap is invisible.

It becomes visible only when someone leans on the wall and discovers it’s no longer load-bearing.