He Drank Deadly Bacteria to Prove He Was Right

The economics of being right early

The question isn’t why Barry Marshall drank a petri dish of bacteria in 1984. The question is why he had to.

He’d already published papers showing Helicobacter pylori in the stomachs of ulcer patients. He’d already demonstrated the association.

The medical establishment looked at his data and shrugged.

“Interesting,” they said. “Not decisive.” Meanwhile, surgeons kept cutting vagus nerves, and pharmaceutical companies kept printing money on acid blockers.

Rather than fighting ignorance, Marshall was fighting an economy. Ulcers were less a disease than a business model, a career path, and a definition of what “good medicine” looked like.

Being right wouldn’t be enough.

To win, he had to make being wrong more expensive than changing one's mind.

The Shape of Ordinary Proof

In early July 1984, Marshall underwent an endoscopy to confirm he was negative for H. pylori, the bacterium he believed caused ulcers.

Three weeks later, he drank a suspension of the bacteria.

Five days after that, he felt bloating and fullness, and his appetite dropped. Testing confirmed that the bacteria had colonized his stomach and that he had developed gastritis, the inflammation caused by the same infection he believed drove most peptic ulcers.

If you tell this as “one brave doctor proved a point,” it becomes medical folklore. If you tell it as an institutional story, the pattern generalizes: the system was structured so that ordinary proof could not land.

That is why he had to turn himself into a walking exhibit.

Marshall’s self-experiment was an assault on deniability. Peer-reviewed data fails in paradigm conflict because it leaves room for the incumbent frame to declare the anomaly irrelevant. “Contamination.” “Selection bias.” “Association, not causation.” “Commensal colonization.”

In a mature field, those words are tools for keeping the map intact. But if a healthy investigator demonstrates baseline negativity, then self-inoculates, then develops symptoms and shows colonization and inflammation on follow-up, the easy objections collapse.

In his Nobel lecture, Marshall explicitly positions the self-experiment as a response to a field that wasn’t treating his claim as a credible causal model.

Even that didn’t instantly flip the establishment.

The Economy of Ulcers

To see why Marshall’s evidence couldn’t land through normal channels, you have to understand what ulcers were before H. pylori.

Not the disease. The economy.

For much of the 20th century, peptic ulcer disease was treated as a chronic acid problem. Sometimes a stress problem. Often, a surgical problem. Vagotomy was a standard procedure. Surgery was serious medicine, and ulcers were a serious surgical domain.

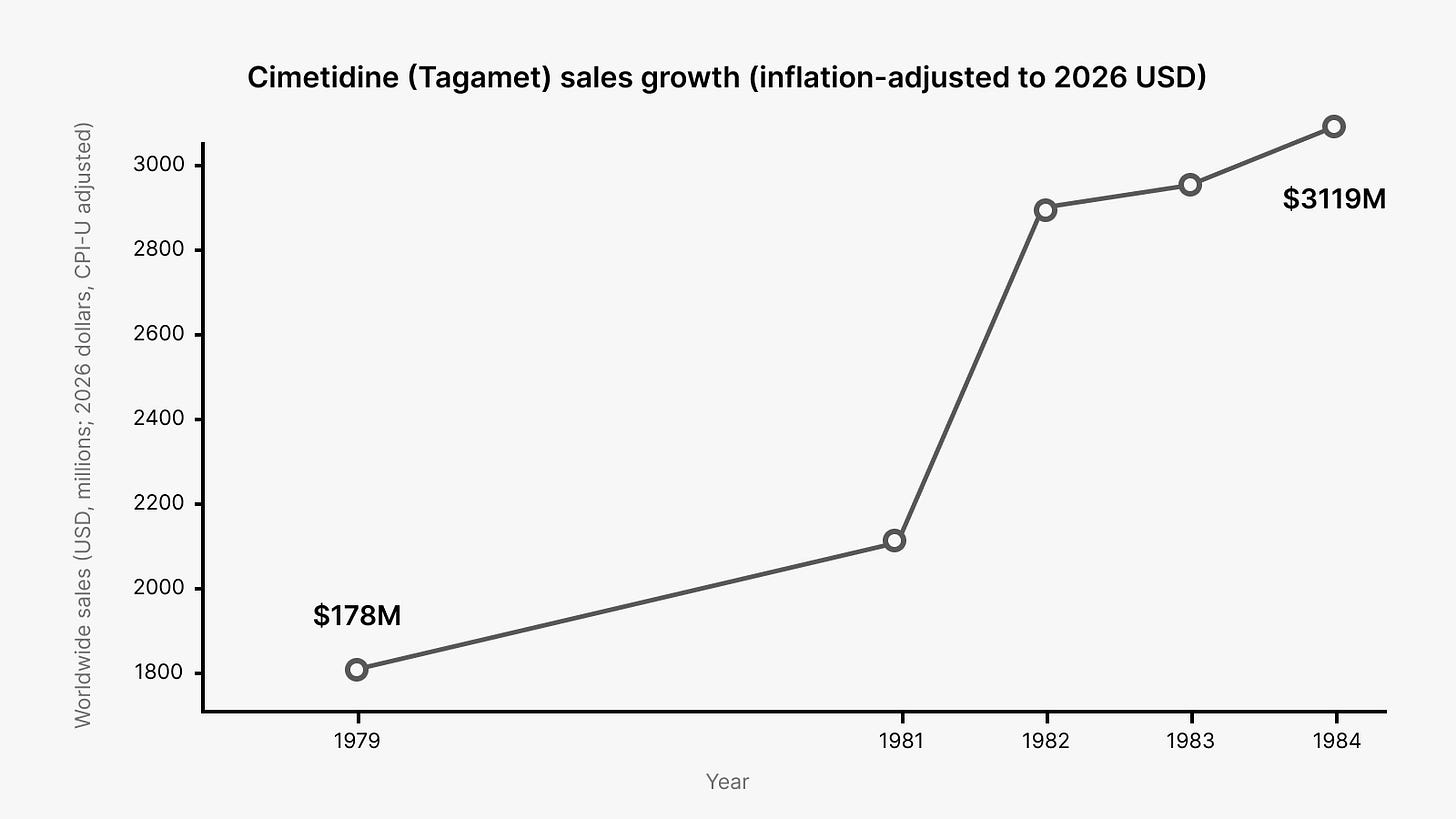

Then the drug era arrived and strengthened the acid paradigm rather than weakening it. Cimetidine hit the market in the mid-1970s and became foundational therapy. It worked. Patients felt better. The mechanism was elegant. The money was extraordinary.

Once a frame has a clean mechanism and a blockbuster treatment, it stops feeling like a hypothesis. It starts feeling like reality itself.

Against that backdrop, Warren and Marshall’s claim that ulcers were tied to a bacterial infection sounded like a category error.

The stomach was supposed to be a hostile, acidic environment where bacteria couldn’t meaningfully persist. The bacteria model implied that a large fraction of ulcer care had been organized around the wrong kind of problem entirely.

They published early signals anyway. In 1983, they reported unidentified curved bacilli on gastric epithelium in active chronic gastritis. In 1984, they reported that such bacteria were present in most patients with active chronic gastritis, duodenal ulcer, or gastric ulcer, suggesting an etiologic role.

Those papers were asking colleagues to reclassify the disease. That’s very expensive.

A worldview employs people. It funds departments. It creates “serious” specialties. It produces prestige.

When ulcers are treated as an acid disorder, a certain kind of competence is rewarded: careful titration, elegant pharmacology, sophisticated surgery for refractory cases.

When ulcers are treated as an infection, the center of gravity moves. The old work doesn’t become worthless, but much of it becomes less central, less heroic, and in some cases less necessary.

This is why early rejection tends to be framed as epistemic even when it is driven by incentives and identity.

People don’t experience it as “I am defending my status.” They experience it as “this is not how reality works,” because their professional self is built inside that particular description of reality.

Why They Can’t Switch Sides

A paradigm is a massive machine for manufacturing what you might call symbolic capital: the accumulated expectation that listening to certain people will save time, reduce risk, and prevent embarrassment.

It lets those who hold it be half-believed before they finish speaking. Without it, even correct claims must be proven, then proven again.

A significant portion of gastroenterology’s symbolic capital had been accumulated under the acid and stress worldview. If the bacterial story wins, the old “good judgment” gets repriced. People who were cautious within the old map become wrong within the new map. That feels like theft, because their status was earned under the prior rules.

This explains a fact that looks paradoxical until you see the structure: even people who privately suspect you are right may still oppose you publicly. Public adoption requires them to defect from the coalition that grants them legitimacy before the new coalition is stable enough to protect them.

A paradigm is a coordination device. It tells people which facts matter, which risks are tolerable, and what counts as a responsible response. Organizations select for plausibility before accuracy, because plausibility allows coordinated action without embarrassment.

When you threaten the paradigm, you’re threatening the coordination mechanism, not merely a set of beliefs.

The Semmelweis Lesson

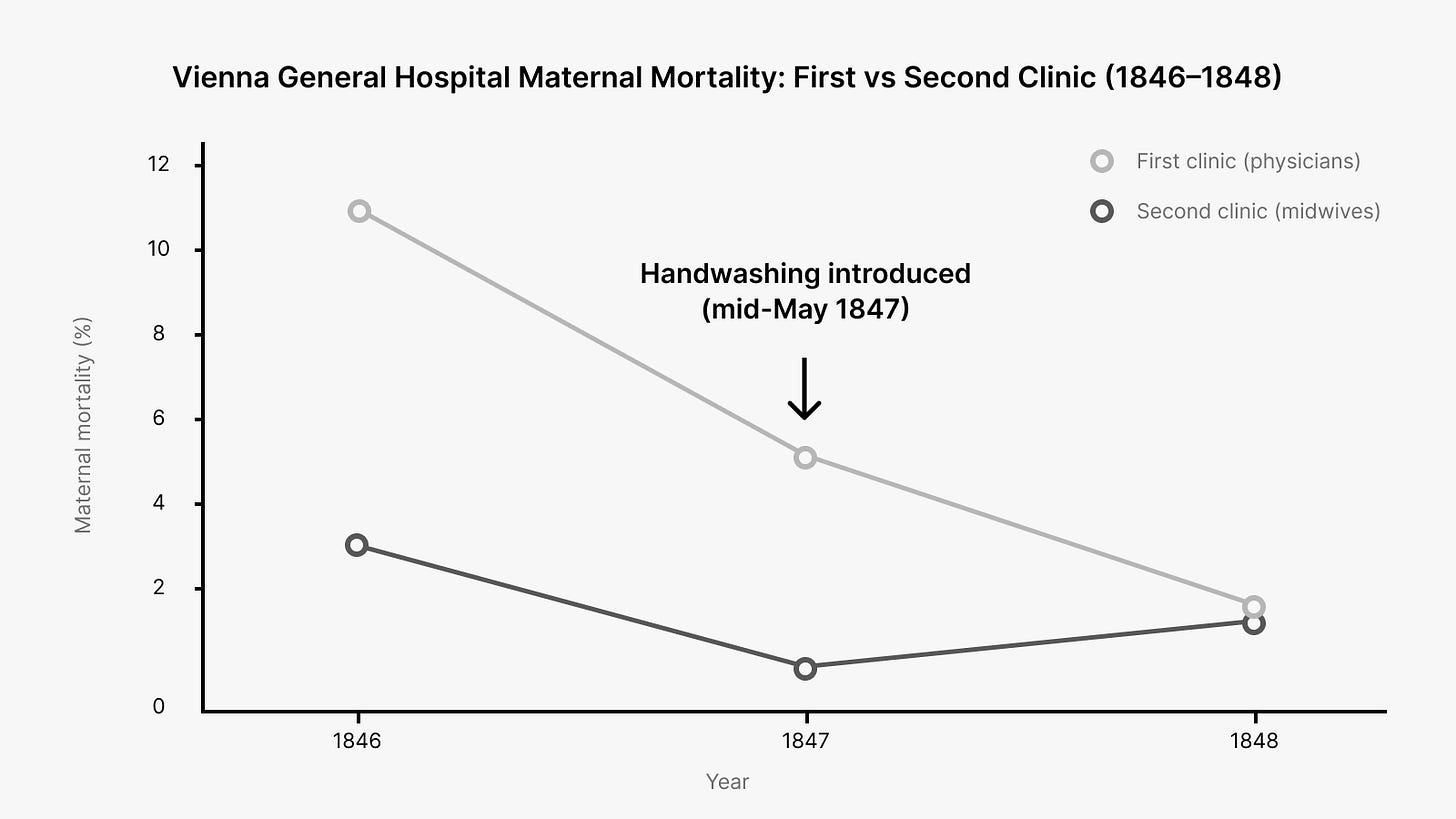

In the 1840s, at Vienna General Hospital, Ignaz Semmelweis observed that maternal mortality was far higher in the doctors’ clinic than in the midwives’ clinic.

He traced the difference to doctors moving from autopsies to deliveries without washing their hands.

When he instituted mandatory handwashing with a chlorinated lime solution, mortality dropped from the mid-teens, in percentage terms, to below 2% within months.

If you read this through a modern germ-theory lens, the refusal to adopt his method looks insane. If you read it through the institutional lens of the time, it looks grim but coherent.

Semmelweis could show an intervention worked. He could not supply a mechanism the establishment recognized as real. Germ theory wasn’t installed yet. “Cadaverous particles” sounded like mysticism.

Most importantly, his conclusion implicated his colleagues. The new practice didn’t merely say “we can do better.” It said “your ordinary work has been killing mothers.”

Semmelweis was asking colleagues to accept that the defining ritual of their profession was a vector of death. Far beyond a technical correction, that’s a demand for public self-indictment.

Semmelweis is less a story about arrogance and more a story about how paradigms defend their moral order.

The old frame preserved innocence. The new frame distributed guilt.

What Marshall Understood

Marshall did something most “right early” people refuse to do. He paid the cost personally to change the cost structure for everyone else.

He didn’t just submit more papers. He constructed a demonstration that moved the argument from “interpret this dataset” to “watch this happen.” That shift matters because it changes what kind of courage is required of the adopter.

His self-experiment was a piece of borrowed cognition: a pre-assembled sequence that others could deploy without having to fight the full battle themselves. Baseline negativity, ingestion, symptoms, colonization, cure. It condensed an abstract causal argument into a narrative that was easy to repeat.

Narrative functions as uncertainty-reduction technology. Frames spread when they provide repeatable justification. Marshall’s demonstration gave the field something it could use.

Semmelweis couldn’t do that.

He lived before the mechanism that would let others narrate his results without the stigma of superstition or accusation. He could mandate practice locally. He couldn’t furnish a grammar that let physicians adopt it without feeling accused or irrational.

In February 1994, the NIH Consensus Development Conference recommended antimicrobial therapy to eradicate H. pylori.

That’s a full decade after Marshall’s self-experiment. The new model had to become official before it became normal.

Once the consensus statement existed, adoption stopped requiring personal heresy and became a matter of professional compliance.

Marshall and Robin Warren received the Nobel Prize in 2005. The Nobel framing treats their work as transforming ulcer disease from a chronic, recurring condition into one that can be cured.

More than a scientific conclusion, it became a reallocation of what counts as legitimate medicine in that domain.

The Cost of Being Right Early

Being right early means you’re fighting a disease model and a prestige model at the same time. You’re asking for admissions that can feel morally catastrophic. You’re threatening the coordination mechanism that tells everyone what “responsible” action looks like.

Cynics will name ignorance or corruption as the obstacle, but it usually isn’t. The obstacle is that your audience doesn’t yet have a script that makes adopting your model feel safe. They can’t use your evidence without a grammar that lets them speak it.

If you’re sitting on evidence that your institution isn’t ready to hear, the question isn’t whether you’re right. The question is whether you can make your map easier to adopt than their map is to defend.

Don’t fight for correctness. Fight to make being wrong more expensive than changing their minds.